AI: Our initial investment thinking

Are we on the brink of one of those huge advances for humankind that changes everything? Across capabilities, we assessed the potential impact of artificial intelligence.

20 Jul 2023

15 minutes

Eric Opara

Nikhil Solanki

Anton Du Plooy

Context

In a Thematic Macro session in May, Ninety One held a horizon-scanning exercise on the potential impact of AI. This session was prepared on a cross-capability basis to maximise input and collaboration. We lay out here the talking points that shape Ninety One’s current thinking on AI and investing. Given the speed of AI development, this article is inherently incomplete. Its success is more a function of whether we are asking the right questions than whether we have the right answers. Needless to say, we expect our thinking to evolve rapidly.

A brief history

AI is a broad field of study. This paper focuses on programmes or applications that can produce very human-like output, conventionally known as generative AI. The intelligence of these systems lies not only in producing human-like outputs but also in using machine learning techniques for continuous training and improvement without needing human input.

The novelty of ChatGPT has rocketed AI to the top of research agendas, but, as the saying goes, it takes a long time to become an overnight success. It’s 73 years since Alan Turing invented the ‘Turing test’ which set an early benchmark for AI to aspire to: display intelligent behaviour that is indistinguishable from a human’s.

Stepping back, AI has seen a number of evolutions: from early enthusiasm in the 1950s as a general problem solver that could approach puzzles in a similar way to humans, i.e. via an order of subgoals, to the ELIZA model in the ’60s that could create sentences that sounded very human-like. Then in the ’70s, knowledge-based systems proved successful compared to junior doctors in medical examinations. In the ’90s, IBM’s Deep Blue grabbed headlines when it beat Garry Kasparov at chess.

Then there was a so-called ‘AI winter’ for the next couple of decades as researchers struggled to make significant breakthroughs. This chart from Harvard shows the timeline: one conclusion you could draw is that historically AI has made progress, but in leaps, and with frequent periods where optimism has given way to pessimism. In other words, progress wasn’t inexorable in the past and we should not assume it is inexorable today.

Figure 1: Artificial intelligence timeline

Source: Harvard University. Please note this has been redrawn by Ninety One.

To help understand what AI is, we like the way Dataconomy, a data science hub, splits AI into three broad conceptual categories:

01 Artificial narrow intelligence

- Also known as weak AI. Goal-oriented systems with limited applications. This includes things like natural language processing (NLP) and the chatbots that are becoming more commonplace.

- Most AI has limited memory, where machines use large volumes of data for deep learning. With this deep learning, programmes offer personalised AI experiences like virtual assistants or search engines that store your data and personalise your future experiences. Apple’s Siri is an example of narrow AI.

02 Artificial general intelligence

- Far stronger and more flexible. An advanced form of artificial narrow intelligence.

- Theoretical at this stage, this is a computer model that can perform a multitude of ‘human’ cognitive tasks, such as recognising images and sounds, solving logic problems and recognising patterns in data. It should be as good as or better than humans at solving problems in many areas requiring intelligence.

03 Artificial super intelligence

- In some ways the end game. A computer can be turned to a plethora of tasks and perform them far ahead of what a human would be capable of doing.

ChatGPT is an advanced form of artificial narrow intelligence. Commonly referred to as ‘generative AI’, it represents a step change in capabilities within the category of narrow artificial intelligence. Generative AI can take a given set of inputs and produce sophisticated outputs, not just in text but also as images, audio and synthetic data. This is starting to push the boundaries between narrow and general intelligence. But for now, artificial general intelligence remains a theoretical objective.

Why all the hype (now)?

Excitement is likely running well ahead of practical implementation

Excitement is likely running well ahead of practical implementation as generative AI streaks towards the top of the Gartner Hype Cycle, which identifies the must-know innovations expected to drive high or even transformational benefits. Why? Because the recent step change in AI capabilities has captured imaginations and given rise to thousands of potential applications and productivity gains. However, these implementations will take time. Innovators must first experiment with the technology and assess how it applies to societal needs. This creates potential applications that will deliver on AI’s promise.

Figure 2: Hype cycle for artificial intelligence, 2022

Source: Gartner, as at July 2022. Please note this has been redrawn by Ninety One.

What created this step change? The answer lies in a pioneering deep learning model architecture called the ‘Transformer’, introduced in 2017. Like other language models, Transformers take some form of input and predict what the output should be. What sets them apart is a mechanism known as ‘attention’, which allows the model to weigh the importance of different parts of the input, giving it the ability to maintain context and capture relationships within the data, thereby revolutionising how machines interpret sequences. When large enough and trained on enough data, these models can produce outputs that approach human-level performance on some tasks.
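To make the idea of weighing different parts of the input more concrete, the sketch below shows a minimal version of ‘scaled dot-product attention’, the weighting step used in Transformer models. It is an illustration only: the function names and the tiny two-dimensional vectors are our own assumptions, and real models learn these vectors from data and run many such steps in parallel.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Blend the values, weighting each by how well its key matches the query."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    width = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(width)]
    return weights, output


# Hypothetical toy inputs: three input positions, two dimensions each.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attention([1.0, 0.0], keys, values)

print("attention weights:", [round(w, 3) for w in weights])
print("blended output:", [round(x, 3) for x in output])
```

In this toy example the query matches the first and third inputs more closely than the second, so those two receive more weight in the blended output.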

Advances in computing power combined with better training methodologies have driven the exponential growth in AI capabilities. To put that in context, between 2010 and 2022 the so-called training compute metric has grown by a factor of 10 billion, with a doubling time of around 5-6 months. Training compute refers to the total amount of computation required to train an AI model. It is measured in floating-point operations (FLOP), which should not be confused with FLOPS (floating-point operations per second), a measure of hardware speed.
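As a rough sanity check on these figures, the sketch below converts between a total growth factor and a doubling period. The function names are illustrative and the inputs are simply the rounded numbers quoted above, so the results should be read as approximate rather than as a restatement of the underlying research.

```python
import math


def implied_doubling_months(growth_factor, years):
    """Doubling period (in months) implied by a total growth factor over a number of years."""
    doublings = math.log2(growth_factor)
    return years * 12 / doublings


def growth_from_doubling(doubling_months, years):
    """Total growth factor implied by a constant doubling period."""
    return 2 ** (years * 12 / doubling_months)


# Rounded inputs from the text: a 10 billion-fold increase between 2010 and 2022.
months = implied_doubling_months(10e9, 12)
print(f"Implied doubling period: {months:.1f} months")

# The reverse calculation for the quoted 5-6 month range.
for doubling in (5, 6):
    factor = growth_from_doubling(doubling, 12)
    print(f"Doubling every {doubling} months for 12 years: about {factor:.2e}x")
```

On these rounded inputs, a 10 billion-fold increase over 12 years implies a doubling period of a little over four months, while doubling every 5-6 months gives a smaller overall multiple. The quoted figures are therefore best treated as orders of magnitude.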

Another way to bring to life the pace at which generative AI is accelerating is a comparison between GPT-3.5, released in Q4 2022, and GPT-4, released in March 2023. The latter showed strong improvement over a range of professional exams. For example, on the bar exam (for law), GPT-4 scored above the 80th percentile of test takers while GPT-3.5 was below the 20th.

While the GPT series has captured attention with its powerful communication skills, the timeline in Figure 3 tracks large language models (LLMs) as they were launched: from just one in 2019 to more than one new launch a month in 2023. Increasingly, smaller open-source models are being built on curated datasets that provide good enough outcomes within bounded use cases, while avoiding the compute resource intensity of LLMs. Taking the proliferation of LLMs together with advances in the open-source community, a likely implication is that there will be little competitive advantage to be gained from the models themselves. Rather, the competitive advantages will flow to those capable of leveraging the technology to create a sought-after use case.

Figure 3: Timeline of existing large language models

Source: A Survey of Large Language Models (arxiv.org). Timeline of existing large language models (having a size larger than 10B) in recent years. The timeline was established mainly according to the release date (e.g. the submission date to arXiv) of the technical paper for a model. If there was not a corresponding paper, the surveyors set the date of a model as the earliest time of its public release or announcement. Please note this has been redrawn by Ninety One.

Although the hype might be ahead of potential applications, we can expect rapid expansion in the tasks AI is expected to perform. This research from Sequoia Capital, published in September 2022, illustrates how models might progress, along with their associated applications.

Figure 4: Timeline for how fundamental models might progress

| | Pre-2020 | 2020 | 2022 | 2023? | 2025? | 2030? |
|---|---|---|---|---|---|---|
| Text | Spam detection; Translation; Basic Q&A | Basic copy writing; First drafts | Longer form; Second drafts | Vertical fine tuning gets good (scientific papers, etc) | Final drafts better than the human average | Final drafts better than professional writers |
| Code | 1-line auto-complete | Multi-line generation | Longer form; Better accuracy | More languages; More verticals | Text to product (draft) | Text to product (final), better than full-time developers |
| Images | | | Art; Logos; Photography | Mock-ups (product design, architecture, etc.) | Final drafts (product design, architecture, etc.) | Final drafts better than professional artists, designers, photographers |
| Video / 3D / Gaming | | | First attempts at 3D/video models | Basic/first draft videos and 3D files | Second drafts | AI Roblox; Video games and movies are personalised dreams |

Large model availability (key): First attempts; Almost there; Ready for prime time.

Source: Sequoia Capital, as at September 2022.

The research explains that ‘Text’ is most advanced, but models are still used to iterate or produce first drafts. Perhaps by 2030, models will produce the final drafts. AI for ‘Code generation’ will have a big impact on the productivity of developers. By 2030, AI converting plain text to programming languages could create better code than full-time developers. It will also open up programming to non-developers. Using AI for ‘Images’ is a more recent phenomenon, but the results have been very popular to share on social media. NVIDIA CEO Jensen Huang’s keynote at Taiwan’s Computex event, ‘The next AI moment is here’, shows the potential for images, video and 3D gaming (watch from just after the four-minute mark).

Investment trends and the AI stack

The level at which companies are investing in AI has grown at a compounded rate of 30% for the last five years.

The level at which companies are investing in AI has grown at a compound annual growth rate of 30% for the last five years. More than five times the number of venture capital (VC) deals were completed in 2022 compared to five years ago and the value of those deals was more than ten times higher. The direction is clear, and this has happened despite a slowdown in VC funding more generally. Most private investment comes from companies in the US. Private investment in China is about half that of the US, and Europe accounts for a much smaller share.
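To show what these growth figures imply, the sketch below applies the standard compound growth formula. The helper names are illustrative, and the inputs are the rounded figures from the paragraph above; note that corporate investment and VC deal activity are different measures, so the outputs are not directly comparable.

```python
def compound_growth(rate, years):
    """Total multiple after growing at a constant annual rate."""
    return (1 + rate) ** years


def annualised_rate(multiple, years):
    """Constant annual growth rate implied by a total multiple."""
    return multiple ** (1 / years) - 1


investment_multiple = compound_growth(0.30, 5)  # 30% a year for five years
deal_count_rate = annualised_rate(5, 5)         # deal count: five times in five years
deal_value_rate = annualised_rate(10, 5)        # deal value: ten times in five years

print(f"30% a year for five years: {investment_multiple:.2f}x")
print(f"Five-fold deal count implies about {deal_count_rate:.0%} a year")
print(f"Ten-fold deal value implies about {deal_value_rate:.0%} a year")
```

On these inputs, 30% a year for five years compounds to roughly 3.7 times the starting level, while a tenfold rise in deal value over five years corresponds to roughly 58% a year.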

The large-platform tech companies are overrepresented. Two big step changes are visible in the big US tech companies. In 2017-2018, the likes of Microsoft, Alphabet and Meta doubled capex, a significant portion of which was directed at AI. They then ramped up again in 2022-2023.

Figure 5: Aggregate capex (US$bn)

Source: Ninety One, Bloomberg. (AWS refers to Amazon Web Services).

To better understand the types of companies competing in the AI landscape, it is helpful to think about the emergent AI stack which we simplify into three categories:

- Applications: The apps that we use that are powered by the AI models.

- Models: The AI models themselves.

- Infrastructure: The infrastructure the models run on.

To help dissect the three categories in the AI stack, we can define ChatGPT as the app and GPT-3 or GPT-4 as the models, while most models run on some form of cloud computing like AWS or Azure. This chart shows where a range of companies are competing for AI market share:

Figure 6: The emergent AI stack

| Layer |
|---|
| Apps |
| Models |
| Infrastructure |

Source: Ninety One.

Much of the hype has been centred on the top of the stack and especially examples like ChatGPT running on GPT-3 or 4. However, for investors it is not clear whether economic value will accrue to the apps or the models they are running on. It might be in a couple of years’ time that Google’s Bard overtakes ChatGPT or that AI embedded in Microsoft’s Bing becomes more popular.

However, further down the stack, in the infrastructure that makes AI possible, there are examples of companies with clearer competitive advantages. They will be the major players in AI over the long term.

Figure 7: The bottom of the emergent AI stack

| Group | Layer |
|---|---|
| Infrastructure | Cloud platforms |
| Infrastructure | Computer hardware |
| Infrastructure behind the infrastructure | Foundries/Memory |
| Infrastructure behind the infrastructure | Capital equipment/EDA |

Source: Ninety One.

At the bottom of the stack, there are different types of infrastructure. AI models run on cloud computing and there are effectively three major companies with strong established positions: Amazon Web Services (AWS), Microsoft Azure and Google Cloud Platform.

The computing hardware powering cloud platforms is dominated by NVIDIA, which produces the raw computing power used to train the AI models. This is behind the recent surge in NVIDIA’s share price and is one of the purest ways to invest in the AI trend. However, it is possible to step further back and look at the infrastructure behind the infrastructure.

NVIDIA designs the hardware, but it is Taiwan Semiconductor Manufacturing Company (TSMC) that manufactures it, though TSMC is exposed to several different revenue streams related to chip production. You can then take one further step back to ASML, which builds the machines installed within the TSMC plants and effectively has a monopoly on the equipment used to make AI chips. Or there is software provider Cadence, which NVIDIA relies on for the design process of its hardware.

The question then becomes, how big is the AI prize? Using NVIDIA as the example, in 2015 the company was earning revenue of c.US$5 billion, with c.US$340 million from the data centre business. Now in 2023, the company earns c.US$27 billion, of which c.US$15 billion is from the data centre business, and this number is likely to double again in the next few years. This shows AI has been a secular driver in the data centre business, and for NVIDIA specifically, for some time now.

Source: Nvidia’s SEC filing.
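To put the NVIDIA figures in perspective, the sketch below works out the data centre share of revenue and the implied annual growth rates. It is a back-of-the-envelope calculation on the rounded numbers quoted above; the variable names are illustrative, and treating 2015 to 2023 as eight full years is our simplifying assumption.

```python
def annualised_rate(start, end, years):
    """Constant annual growth rate that takes 'start' to 'end' over 'years'."""
    return (end / start) ** (1 / years) - 1


# Rounded figures from the text, in US$ billions.
revenue_2015, data_centre_2015 = 5.0, 0.34
revenue_2023, data_centre_2023 = 27.0, 15.0
years = 8  # assumption: eight full years between the two data points

share_2015 = data_centre_2015 / revenue_2015
share_2023 = data_centre_2023 / revenue_2023
total_growth = annualised_rate(revenue_2015, revenue_2023, years)
data_centre_growth = annualised_rate(data_centre_2015, data_centre_2023, years)

print(f"Data centre share of revenue: {share_2015:.0%} in 2015, {share_2023:.0%} in 2023")
print(f"Implied annual growth, total revenue: {total_growth:.0%}")
print(f"Implied annual growth, data centre: {data_centre_growth:.0%}")
```

On these rounded inputs, the data centre business grows from about 7% to about 56% of revenue, which implies growth of roughly 60% a year against roughly 23% a year for the company as a whole.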

This is not a buy, sell or hold recommendation for any particular security.

Case study: the impact of AI on web search

If Bing’s ChatGPT integration helps it take just a 1% share from Google, it could generate around US$2 billion in annual revenue.

Advertising on web search platforms generates massive revenues. Google has a US$170 billion search business, and shortly after ChatGPT launched, Microsoft announced it was integrating the technology into Bing, its search engine, and Edge, its browser. Just taking a 1% share from Google could generate around US$2 billion in annual revenue. Microsoft was clear it wanted to go after Google’s dominance in web search and AI was going to help the effort. This was a red alert for Google. Google responded by launching its own AI app, Google Bard, but saw its stock drop when Bard offered up a wrong response.
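The arithmetic behind the 1% figure is simple, and the sketch below lays it out along with a couple of alternative scenarios. The function name and the extra share scenarios are hypothetical, and the calculation assumes revenue moves one-for-one with share of the US$170 billion figure quoted above, which is a simplification.

```python
def revenue_from_share_gain(search_revenue_bn, share_gain):
    """Annual revenue (US$bn) captured by taking a given share of a search business."""
    return search_revenue_bn * share_gain


google_search_revenue_bn = 170.0  # figure quoted in the text

# 1% is the scenario in the text; 2% and 5% are hypothetical sensitivities.
for gain in (0.01, 0.02, 0.05):
    revenue = revenue_from_share_gain(google_search_revenue_bn, gain)
    print(f"{gain:.0%} share gain: about US${revenue:.1f} billion a year")
```

A 1% share on this basis is US$1.7 billion, which rounds to the ‘around US$2 billion’ cited.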

The task for Microsoft is not straightforward and hence negative sentiment toward Google’s parent, Alphabet, might be overdone. The Google ecosystem attracts four billion users every day and is reinforced by its operating system in mobile devices (Android). Add to this Google’s panoply of distribution agreements, like the one with Apple, and you can surmise that changing default user behaviour is not an easy task. Furthermore, Google estimates less than 2% of searches are currently monetised. And these are not typically informational searches but linked more to shopping. We can also expect Google to catch up quickly given the company’s potential as an AI leader.

Since February 2023, when Microsoft integrated ChatGPT into Bing and Edge, Bing has not meaningfully increased its market share. What has been interesting is that the share of users going directly to ChatGPT rather than google.com or bing.com has increased, showing a one to two percent gain. Thus far, this indicates that ChatGPT users are going direct, which warrants ongoing analysis.

What would be more meaningful is the announcement from Samsung in April 2023. The company is considering swapping Google as its default search engine with Bing. Samsung earns around US$3 billion in its distribution agreement with Google. This is the share of revenue Samsung receives when Google is used on its devices. There is not a lot of information on the potential swap at this stage, but it does have pros and cons for Samsung. A positive effect would be offering a differentiated product versus other smartphone providers powered by Android. However, what if that differentiated experience is worse? Secondly, would the new revenue share agreement stack up to what is a relatively certain revenue stream from the existing arrangement with Google?

An even bigger question is Apple. Google pays Apple to be the default search engine in Safari across Mac and iOS devices. The distribution agreement between Google and Apple is multiple times larger at circa US$20 billion. There is not a lot of information available about this agreement, but it is believed the renewal is due at the end of this year. Revenues from Google being used on Apple devices account for 27% of Google’s earnings before interest and tax and around 14% of Apple’s. One likely implication for these and other renewal talks is that Google will need to pay a higher percentage of revenues under its distribution agreements.

Worth considering: will AI change the nature of search with a different interface and new ways for search to be monetised? If we’re no longer going to receive a list of links, sponsored links and ads, how will this work? If AI-powered search engines are instead returning answers, web advertising will change, and this could be a major disruption.

No representation is being made that any investment will or is likely to achieve profits or losses similar to those achieved in the past, or that significant losses will be avoided.

This is not a buy, sell or hold recommendation for any particular security. For further information on specific portfolio names, please see the Important information section.

Macro observations

It is generally accepted that AI could transform productivity and economic growth.

It is generally accepted that AI could transform productivity and economic growth. Democratisation of knowledge, speedier decision making, and new products, services and industry will all generate productivity gains. These gains, however, may not be equitable. The starting knowledge base for new technology is an important driver of diffusion. We may well see this again with AI. And given the starting point in developed markets, we could see further bifurcation in economic value whereby the rich get richer, and the poor get poorer. This is a theme we have seen before when parts of the economy are digitised rendering certain jobs obsolete.

Though there is a great deal more heat than light at present, we think it fair to say that AI will lead to a range of jobs and industries losing relevance with significant impact on the labour market. This does not necessarily play out as unemployment, but as labour market polarisation, and gains to capital over labour. The speed at which these impacts will manifest will depend on the level and pace of investment, how governments respond with regulation and how democratised the technology becomes.

When assessing potential impacts on productivity gains and inflation, one obvious reference is Baumol’s cost disease theory. The theory posits, somewhat counterintuitively, that industries making the greatest productivity gains see their share of GDP fall over time. This is because productivity gains are inherently deflationary. Those industries or services that are more stagnant from a productivity perspective see their share of GDP rise through time, driven by price rather than volume. Healthcare has been a good example of this, where efficiency gains have been limited but costs have been rising. Using this idea, AI may increase its capital share within the economy as it is applied to a wider range of occupations and pursuits, but it may be that AI underwhelms in terms of impact on GDP numbers. This chimes with the disagreement among economists around the lack of impact from a wider digital transformation. Economist Robert Solow’s famous line that you can see the computer age everywhere but in the productivity statistics comes to mind.
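The mechanism can be illustrated with a deliberately stylised two-sector example. The sketch below is our own toy model, not Baumol’s original formulation: it assumes both sectors pay the same wage, prices equal unit labour costs, the mix of real output stays fixed, and productivity in the ‘progressive’ sector grows at a hypothetical 5% a year while the ‘stagnant’ sector does not improve.

```python
def progressive_sector_share(years, growth=0.05):
    """Nominal GDP share of the sector whose productivity grows at 'growth' a year.

    Toy assumptions: a common wage of 1, prices equal to unit labour cost,
    and one unit of real output from each sector every year.
    """
    productivity_progressive = (1 + growth) ** years
    productivity_stagnant = 1.0
    price_progressive = 1.0 / productivity_progressive  # falls as productivity rises
    price_stagnant = 1.0 / productivity_stagnant        # unchanged
    return price_progressive / (price_progressive + price_stagnant)


for year in (0, 10, 20, 30):
    share = progressive_sector_share(year)
    print(f"Year {year}: progressive sector is {share:.0%} of nominal GDP")
```

Under these assumptions the more productive sector starts at half of nominal GDP and shrinks steadily as its prices fall, even though its real output has not changed, which is the counterintuitive result described above.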

The diffusion of new technology, despite the hype, tends to be slow and gradual. Yes, we will see a stream of headline-grabbing novel applications; however, the true gains are achieved over time when new AI-first systems are created. Similarly, the true power of the internet was unleashed over time when digital native companies, unencumbered by legacy businesses, built applications from the ground up. Some countries will be better than others at absorbing the productivity gains from AI. Arguably, the more existing technology a country has, the better it can adopt new technological developments. This is supported by a recent McKinsey survey, which found that 75% of AI-adopting firms relied on their existing knowledge base to do so.

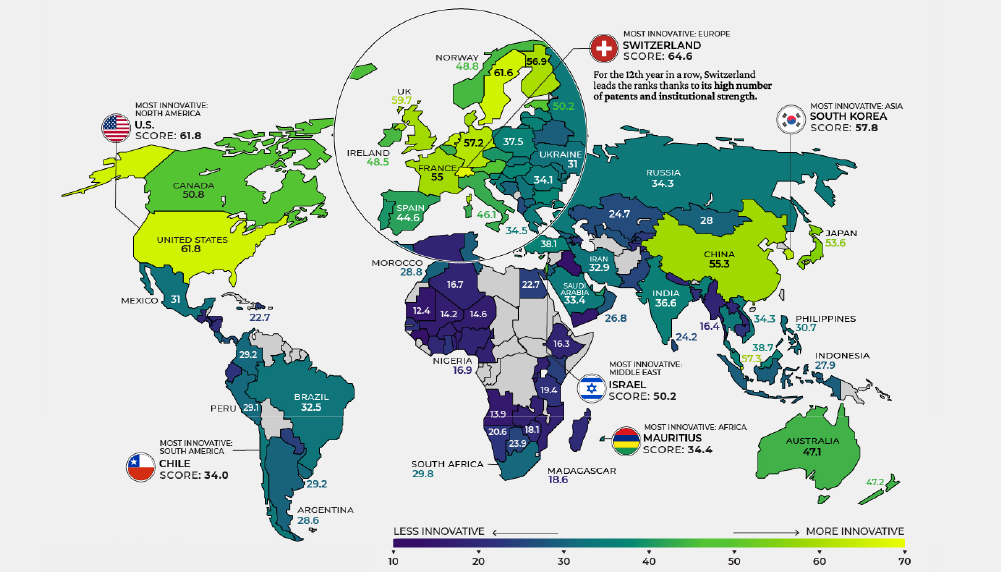

Which countries might have most success? There is something called the Global Innovation Index that looks at seven distinct categories which together summarise how well a country absorbs new innovations and technologies. The categories are business diversification, human capital and research institutions, infrastructure, market sophistication, knowledge, technology outputs and creative outputs. The US scores very well, but interestingly so does China.

Figure 8: Global Innovation Index 2022

Source: Visual Capitalist, as at December 2022.

Among companies, there is a feature that is likely to continue. Those firms that are among the top 5% of technology adopters will increase their market share relative to the other 95% over time. This happens across manufacturing and services and means once you establish your position on the technology frontier, that becomes a very strong part of your moat. A self-perpetuating advantage. This however has implications for diffusion and means those countries working harder to counterbalance this dynamic are likely to have a better aggregate outcome than those allowing it to take hold.

There have been several reports on the overall impact of generative AI on the labour force. A widely distributed research report from OpenAI suggests 80% of the US workforce could see at least 10% of their work tasks affected by the introduction of large language models. It goes on to say that 19% of workers may see at least 50% of their tasks impacted. Unlike automation, however, which has replaced large swathes of blue-collar work, there is far greater potential for AI to impact higher-skilled occupations. This is what comes from the democratisation of knowledge. A consultant doctor, for example, derives their value from 25 years of experience and having seen many variations and treatment outcomes in their field. Through the democratisation of knowledge, a doctor with three to five years of experience may well be able to perform at the same level, at least at the diagnostic stage. This could vastly reduce consultant waiting lists.

Information processing industries have the highest exposure while manufacturing, agriculture and mining demonstrate the lowest exposure. The following tables provide an early look at occupations most at risk from generative AI and large language models. The ‘Human’ groups were rated by people, while the ‘Model’ groups are the output from AI itself based on certain parameters. Ultimately the outcomes are quite similar.

| Group | Occupations with highest exposure | % exposure |
|---|---|---|
| Human α | Interpreters and Translators | 76.5 |
| | Survey Researchers | 75.0 |
| | Poets, Lyricists and Creative Writers | 68.8 |
| | Animal Scientists | 66.7 |
| | Public Relations Specialists | 66.7 |
| Human β | Survey Researchers | 84.4 |
| | Writers and Authors | 82.5 |
| | Interpreters and Translators | 82.4 |
| | Public Relations Specialists | 80.6 |
| | Animal Scientists | 77.8 |
| Human ζ | Mathematicians | 100.0 |
| | Tax Preparers | 100.0 |
| | Financial Quantitative Analysts | 100.0 |
| | Writers and Authors | 100.0 |
| | Web and Digital Interface Designers | 100.0 |
| | Humans labelled 15 occupations as ‘fully exposed’. | |

| Group | Occupations with highest exposure | % exposure |
|---|---|---|
| Model α | Mathematicians | 100.0 |
| | Correspondence Clerks | 95.2 |
| | Blockchain Engineers | 94.1 |
| | Court Reporters and Simultaneous Captioners | 92.9 |
| | Proofreaders and Copy Markers | 90.9 |
| Model β | Mathematicians | 100.0 |
| | Blockchain Engineers | 97.1 |
| | Court Reporters and Simultaneous Captioners | 96.4 |
| | Proofreaders and Copy Markers | 95.5 |
| | Correspondence Clerks | 95.2 |
| Model ζ | Accountants and Auditors | 100.0 |
| | News Analysts, Reporters and Journalists | 100.0 |
| | Legal Secretaries and Administrative Assistants | 100.0 |
| | Clinical Data Managers | 100.0 |
| | Climate Change Policy Analysts | 100.0 |
| | The model labelled 86 occupations as ‘fully exposed’. | |
| Highest variance | Search Marketing Strategists | 14.5 |
| | Graphic Designers | 13.4 |
| | Investment Fund Managers | 13.0 |
| | Financial Managers | 13.0 |
| | Insurance Appraisers, Auto Damage | 12.6 |

Source: University of Pennsylvania, as at March 2023.

One hypothesis could be that professional workers experience an elephant curve as shown here for the global change in real income between 1988 and 2008.

Highly productive workers will leverage AI to make themselves even more productive and therefore share in the productivity gains.

A long tail of previously lower-skilled, lower-productivity workers will receive a productivity boost and associated increase in income. Think about the junior doctor example earlier and the potential for diagnostic expertise to be democratised further into the army of nurses.

Then we could have a squeezed middle of ‘average’ professionals unable to upskill who will see a core part of their skillset commoditised, which will be challenging for income growth, at best. These are workers who will likely need to retrain.

Figure 9: Change in real income from 1988 to 2008

Source: ResearchGate.net

Ethical and regulatory implications

While offering huge promise in countless applications, the risk that AI might be used for more nefarious activities means that it will need a social license to operate. For this to happen, AI needs to comply with applicable legislation and regulation. The primary ethical considerations become:

- AI needs to be robust and safe

- Privacy and data governance rules need to be complied with

- AI needs to be transparent

- AI needs to be non-discriminatory

- AI needs to be accountable

As it stands, while legislators are increasingly AI aware, the pace of policy is likely to lag the pace of technological development. An index of AI-related legislative records across 25 countries shows that the number of bills containing ‘artificial intelligence’ that have been passed has grown from one in 2016 to 18 by 2021. The EU published a white paper in 2020 proposing a regulatory framework for AI setting out obligations on developers, deployers and users. It contains three broad areas of focus: ethics, civil liability and intellectual property.

This EU framework is the most exhaustive one to date and shows how AI is likely to be policed. It is a risk-based framework and identifies areas believed to be particularly high risk. This includes major areas like transport, health and education, and more specific guidance around sorting CVs and credit scoring. We know that humans can be biased, and early studies show that AI can accentuate those biases. Therefore, some applications of AI are likely to be prohibited entirely where negative outcomes cannot be sufficiently mitigated.

What is clear is that regulatory interest is rising.

Figure 10: EU Artificial Intelligence Act: risk levels

Source: European Commission

Concluding remarks

After a long history of stop and start, are we on the brink of one of those huge advances for humankind that changes everything, akin to, say, electricity?

We should, however, acknowledge that huge advances in a general-purpose tool like artificial intelligence can take years to work through the economy, as was the case with electricity.

Important new developments in how machines learn and the computing power they can access have created huge excitement about potential applications of AI. This is also why investment in the technology has been growing at an average annual rate of 30%.¹

Figure 11: The generative AI application landscape

| Layer | Text | Code | Image | Speech | Video | 3D | Other |
|---|---|---|---|---|---|---|---|
| Application layer | Marketing (content); Sales (email); Support (chat/email); General writing; Note taking; Other | Code generation; Code documentation; Text to SQL; Web app builders | Image generation; Consumer/Social; Media/Advertising; Design | Voice synthesis | Video editing/generation | 3D models/scenes | Gaming; RPA; Music; Audio; Biology and chemistry |
| Model layer | OpenAI GPT-3; DeepMind Gopher; Facebook OPT; Hugging Face Bloom; Cohere; Anthropic; AI2; Alibaba, Yandex, etc. | OpenAI GPT-3; Tabnine; Stability.ai | OpenAI DALL·E 2; Stable Diffusion; Craiyon | OpenAI | Microsoft X-CLIP; Meta Make-A-Video | DreamFusion; NVIDIA GET3D; MDM | TBD |

Source: Sequoia Capital, as at September 2022.

For investors, the long-term winners are difficult to identify. However, our initial thinking is to analyse the key players providing the infrastructure at the bottom of the AI stack, including those companies in their supply chains. At this stage, this is one of the cleaner ways to invest in the AI trend. As was the case with the advent of software, winners in the application layer are likely to emerge over time. The largest value pools are likely to be found here and early identification of those winners, without the need to be speculative, is likely to be fruitful from an investment perspective.

Macro impacts are likely to be significant, leading to polarisation of the labour market. We can be relatively certain that AI will generate substantial productivity gains and economic growth. But those productivity gains can also be deflationary, particularly for those industries achieving the best gains, and therefore AI may underwhelm in GDP terms.

Regulation will need to catch up and may well determine the speed and success AI enjoys in different parts of the world. This is another dimension for investors to monitor closely.

1 Source: Stanford’s AI Index Report.

Important Information

This communication is provided for general information only and should not be construed as advice.

All the information is believed to be reliable but may be inaccurate or incomplete. The views are those of the contributor at the time of publication and do not necessarily reflect those of Ninety One.

Any opinions stated are honestly held but are not guaranteed and should not be relied upon.

All rights reserved. Issued by Ninety One.